I’ve been having problems deploying a Sql Virtual Machine in Azure lately and decided to perform some tests to get to the bottom of the issue. I’d like to share some strange behaviour.

Problem

If I deploy a new SQL VM using bicep, it deploys fine. If however, I redeploy the same VM the deployment fails with this error:

Ext_AutomatedBackupError

In the SQL Server ERRORLOG on the VM:

2022-03-06 08:24:17.62 Logon Error: 18456, Severity: 14, State: 38.

2022-03-06 08:24:17.62 Logon Login failed for user 'NT Service\SQLIaaSExtensionQuery'. Reason: Failed to open the explicitly specified database 'msdb'. [CLIENT: <local machine>]

2022-03-06 08:24:17.74 spid8s Starting up database 'msdb'.

Delving into the previous ERRORLOG file, ERRORLOG.1 I can see this

2022-03-28 09:13:37.60 spid11s Clearing tempdb database.

2022-03-28 09:13:37.60 spid11s Error: 5123, Severity: 16, State: 1.

2022-03-28 09:13:37.60 spid11s CREATE FILE encountered operating system error 3(The system cannot find the path specified.) while attempting to open or create the physical file 'D:\SQLTemp\Data\tempdb.mdf'.

2022-03-28 09:13:37.65 spid11s Error: 17204, Severity: 16, State: 1.

2022-03-28 09:13:37.65 spid11s FCB::Open failed: Could not open file D:\SQLTemp\Data\tempdb.mdf for file number 1. OS error: 3(The system cannot find the path specified.).

2022-03-28 09:13:37.65 spid11s Error: 5120, Severity: 16, State: 101.

2022-03-28 09:13:37.65 spid11s Unable to open the physical file "D:\SQLTemp\Data\tempdb.mdf". Operating system error 3: "3(The system cannot find the path specified.)".

2022-03-28 09:13:37.65 spid11s Error: 1802, Severity: 16, State: 4.

2022-03-28 09:13:37.65 spid11s CREATE DATABASE failed. Some file names listed could not be created. Check related errors.

2022-03-28 09:13:37.65 spid11s Could not create tempdb. You may not have enough disk space available. Free additional disk space by deleting other files on the tempdb drive and then restart SQL Server. Check for additional errors in the operating system error log that may indicate why the tempdb files could not be initialized.

This suggests that SQL Server is unable to create tempdb.

Machine specs

- Location: UK South

- VM Size: Standard_D2ds_v4

- SqlImageType: sql2019-ws2022

- SqlImageSku: sqldev-gen2

If you want to reproduce the issues I am having I created a repo here. Just clone the repo and change into the repo directory in PowerShell 7, Connect to the Azure subscription you want to deploy to and then run Deploy.ps1.

Initial deploy works fine

Takes 8 minutes to run.

Note

Redeploy without rebooting

Takes 2 minutes to run.

Redeploy after VM deallocated

Strange workaround 1

Restart the SQL Server service and redeploy

Strange workaround 2

Change the VM Size to Standard_D2ds_v4 and redeploy (same as rebooting?)

Microsoft Support

Suggestion 1: Delayed Start

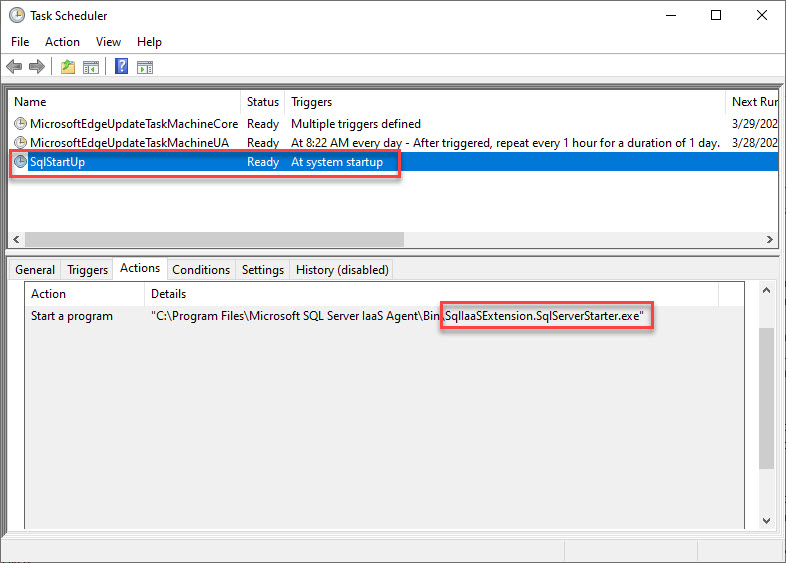

I raised a Support Request with Microsoft and the suggestion that came back was to set the SQL Server service to Delayed Start. This is because there is a program scheduled using the task scheduler to create the D:\SQLTemp\Data and D:\SQLTemp\Log directories on server boot. Looking in Task Scheduler, there is indeed a task called SqlStartUp which creates these tempdb directories.

This is a race condition, where if the SQL Server service wins the race with the SqlStartUp scheduled task, SQL Server will fail to start, because the tempdb directories do not exist.

I tried the Delayed Start suggestion but I get the same error and I suspect that it worked for Microsoft because the race condition just happened to fall on the correct side. However, when I run the deployment I get the same SqlTimeOut error.

Suggestion 2: Manual Start

The next suggestion from Microsoft that seemed to work for them was to use Manual Start for the SQL Server and SQL Server Agent services. However when I tried it, I get the same SqlTimeOut error. Again, classic race condition.

Suggestion 3: Place TempDb on Premium attached storage

Adding another premium disk to the machine and configuring TempDb file to reside on there “solves” the problem. However according to Microsoft’s best practises guide for SQL Server on VMs, TempDb should be placed on the ephemeral local SSD drive (D:\ by default).

Alternative home-grown solution

One solution I am considering, but would rather not, because I am coding around Microsoft, is to set the SQL Server services to Manual start, and run a PowerShell script on startup which creates the TempDb directories, followed by a start of the SQL server services. This should eliminate the race condition.

Conclusion

For now, I will configure the Sql Virtual Machines to use premium attached storage. In my environment it is not too important to have ultra-fast TempDb performance, but I know for some people that is a requirement. I have created a branch called workaround in the repo to show you have I’ve done that. See https://github.com/markallisongit/sqliaas-demo/tree/workaround

Microsoft have escalated the issue to the SQL Product Team, and I’m still waiting for that and will post an update when I hear back.